The KITE Aging Team presents talks by visiting international and University of Toronto experts in mental health, aging, and artificial intelligence.

Accessibility Innovation Exchange (AIX) 2026

Canada’s accessibility innovation community is coming together, and you should be in the room. This is a ticketed event; register at https://events.humanitix.com/accessibility-innovation-exchange-2026. Join us for the Accessibility Innovation Exchange (AIX) 2026 at the KITE Research Institute in Toronto, where the future of accessibility, disability tech, and inclusive innovation comes together. Across Canada, some of the most influential organizations are funding and accelerating disability innovation, each focused on different sectors and stages of growth. These organizations are rarely all accessible in one space. AIX brings together Ontario Brain Institute, Centre for Aging + Brain Health Innovation, MaRS, UHN, KITE Research Institute, Praxis Spinal Cord Institute, CNIB, envisAGE, and AGEWELL (participating organizations are subject to confirmation), giving you a unique chance to understand how the ecosystem works, where you fit, and how to unlock multiple pathways for collaboration and scale.

Speaker: Dr. Martha Gulati, MD, MS, Professor of Cardiology at Houston Methodist and Davis Chair in Women’s Cardiovascular Health

Mark Rochon lecture: Women and Cardiovascular Disease: Is there really a sex difference?

We Walk UHNITED

We Walk UHNITED presented by Rogers isn’t just a walk. It’s an event to raise critical funds in support of UHN. When you raise money for We Walk UHNITED, you help pave the way for UHN to provide world-changing health care and make groundbreaking innovations. Join KITE's team at https://www.wewalkuhnited.ca/join/KITE.

Speaker: Crystal MacKay, Assistant Professor in the School of Rehabilitation Therapy at Queen’s University and an Affiliate Scientist at KITE

Research Rounds: Promoting Physical Activity in People with Lower Limb Amputations

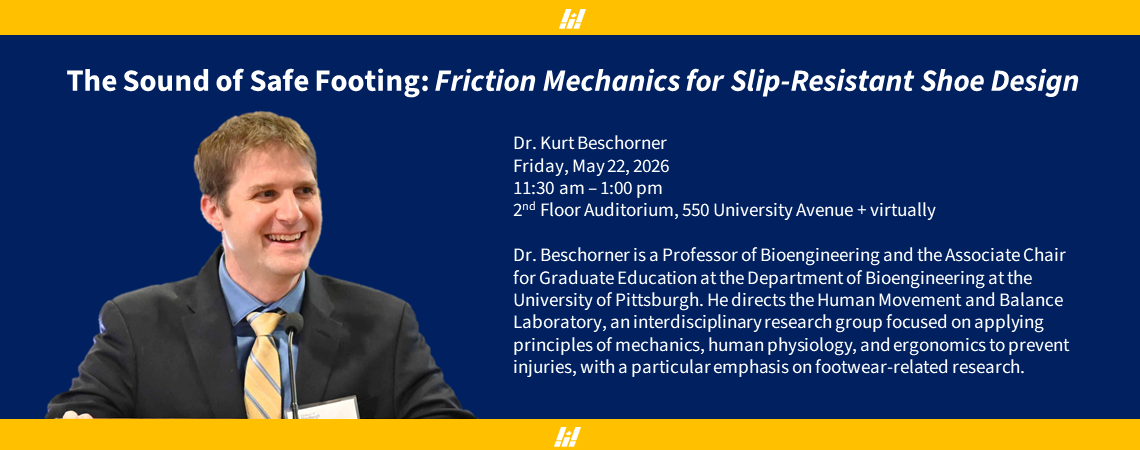

The Sound of Safe Footing: Friction Mechanics for Slip-Resistant Shoe Design

Dr. Beschorner, Professor of Bioengineering and Associate Chair for Graduate Education in the Department of Bioengineering at the University of Pittsburgh, presents "The Sound of Safe Footing: Friction Mechanics for Slip-Resistant Shoe Design."

KITE Mother's Day Marketplace

Please join us for the Mother's Day Marketplace at KITE. On May 7 from 10 am to 4 pm, the KITE Innovations Gallery blossoms into a vibrant Mother’s Day Marketplace featuring a curated selection of local artisan vendors. Discover a beautiful array of handmade crafts, build-your-own bouquets, chic jewelry, and more. Patients, visitors, and staff across UHN are all welcome.

Speaker: Dr. Majid Mirmehdi, a Professor of Computer Vision in the Department of Computer Science at the University of Bristol

Health Assessment at Home: Objective Real-World Measurement of Parkinson’s Disease Symptoms

Speaker: Dr. Sukhvinder Kalsi-Ryan

Dr. Albin T. Jousse Lecture Series: Perspectives of Recovery and Restoration of the Upper Limb after Traumatic Cervical Spinal Cord Injury

SOAR: Showcasing Outstanding Achievement in Research

Please save the date for SOAR: Showcasing Outstanding Achievement in Research at the KITE Research Institute! SOAR celebrates the vibrant trainee community at KITE by bringing together trainees and scientists in a supportive space to share research, exchange ideas, and receive meaningful feedback. Through mentorship, learning, and networking opportunities, the day strengthens connections and fosters collaboration within the KITE community.